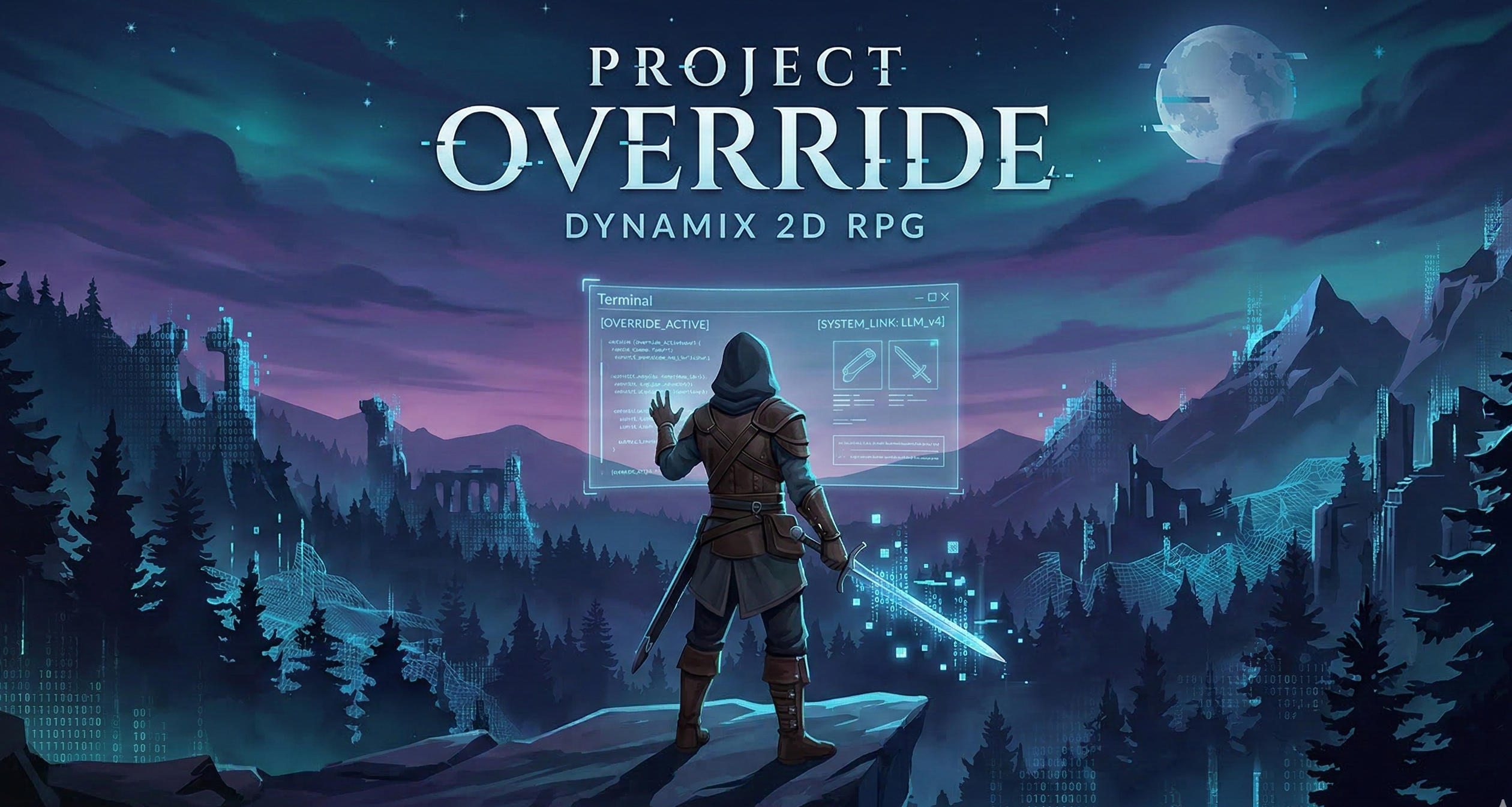

Project Override: A True Dynamic RPG

The Inspiration: Escaping Deterministic Loops

Watching anime like Sword Art Online highlights a glaring gap in modern RPGs: the lack of truly dynamic, self-generating environments. While procedural generation exists, it relies on static assets and rigid rules. The goal of Project Override is to build a game where the algorithm and world develop organically as the player interacts with it, using generative AI not just for dialogue, but for core state management and item creation.

The Core Concept

Project Override masquerades as a traditional, deterministic 2D top-down RPG. The game plays normally until the player encounters an impossible scenario. The game "glitches," and grants the player access to a command-line Terminal.

This Terminal allows players to input natural language prompts to manipulate the game’s reality—altering stats, changing NPC loyalties, spawning items, or rewriting quest conditions. It merges the structured progression of a classic RPG with the sandbox potential of prompt engineering.

To prevent infinite exploitation, Terminal usage requires an in-game resource (e.g., "Glitch Tokens"). Players must engage with the traditional combat/exploration loop to earn the ability to hack the world.

How the Mechanic Naturally Evolves (Scenarios)

The transition from a cozy RPG to a terminal-driven meta-game needs to feel earned and necessary.

Act 1: The Forced Hack: You enter the first dungeon and face a boss with impossibly scaled stats (Level 999, instant-kill attacks). The UI tears, and an unknown user opens a chat prompt: "You can't beat this with math. Rewrite it." You type:

"Make the boss allergic to its own weapon."The Terminal parses this, reduces the boss's HP to 1, and the game resumes.Act 2: Bypassing the Grind: A bridge is guarded by a Troll demanding 10,000 gold. Instead of grinding for 10 hours, you open the Terminal:

"Tell the troll I am a tax inspector and he is being audited."The LLM generates terrified dialogue, the troll flees, and your inventory delta adds 500 gold (the troll's bribe).Act 3: Combat Hacking: During a fight with ice slimes, you prompt:

"Turn the dungeon floor into a giant frying pan."The environment sprites tint red, and a continuous DoT (Damage over Time)health_deltais applied to all water/ice-based entities.

Technical Architecture: Cloud-First & Caching

Relying entirely on live LLM inference for every player action introduces latency and massive API costs. The core engine solves this using a high-speed caching layer.

1. Vector Search and Redis Lookups

When a player inputs a command, we avoid hitting the LLM if a similar action has been requested before.

The Pipeline: The player types

"give me a flaming sword". The string is embedded and queried against a vector database (like Pinecone).Cache Hit: If semantic similarity is >0.95 (e.g., matching a past prompt like "create a fire sword"), the system does a fast Redis lookup to fetch the pre-calculated JSON state deltas. Latency remains under 100ms.

Cache Miss: The prompt is sent to the LLM, evaluated, and the resulting JSON and embedding are stored in the database for future players.

2. Forging New Items (Text + Image Generation)

When a player invents a completely novel item, the game dynamically generates it. If a player prompts "Craft a shield made of frozen time", the LLM calculates the stat deltas (e.g., +50 Defense, applies 'Slow' debuff to attackers) and generates an image generation prompt. An API (like DALL-E 3 or Midjourney) generates the 2D sprite asset on the fly, strips the background, and saves the asset URL to the database alongside the new item's JSON data.

3. The Engine-to-AI Bridge (JSON Schema)

The LLM is restricted to outputting a strict JSON schema. The game engine pauses the physics loop, waits for the JSON, parses the deltas, updates the UI, and unpauses.

JSON

{

"dialogue_update": "Wait, I didn't know you were the tax inspector!",

"entity_target": "troll_guard_01",

"action": "flee",

"deltas": {

"target_health": 0,

"inventory_changes": [

{"item_id": "gold", "amount": 500}

]

}

}

The Alternative: On-Device AI Optimization

If cloud API costs scale too aggressively, the architecture can pivot to a local inference model.

Using a Teacher-Student distillation pipeline, a massive model (like GPT-4o) generates tens of thousands of synthetic game interactions offline based on the JSON schema. An open-weights Small Language Model (SLM) in the 2B-8B parameter range is fine-tuned exclusively on this dataset.

This model is quantized to 4-bit precision (GGUF format, ~2GB size) and embedded directly into the game client. This shifts the compute cost entirely to the user's local VRAM, achieving zero-latency, offline gameplay at the cost of static game updates.